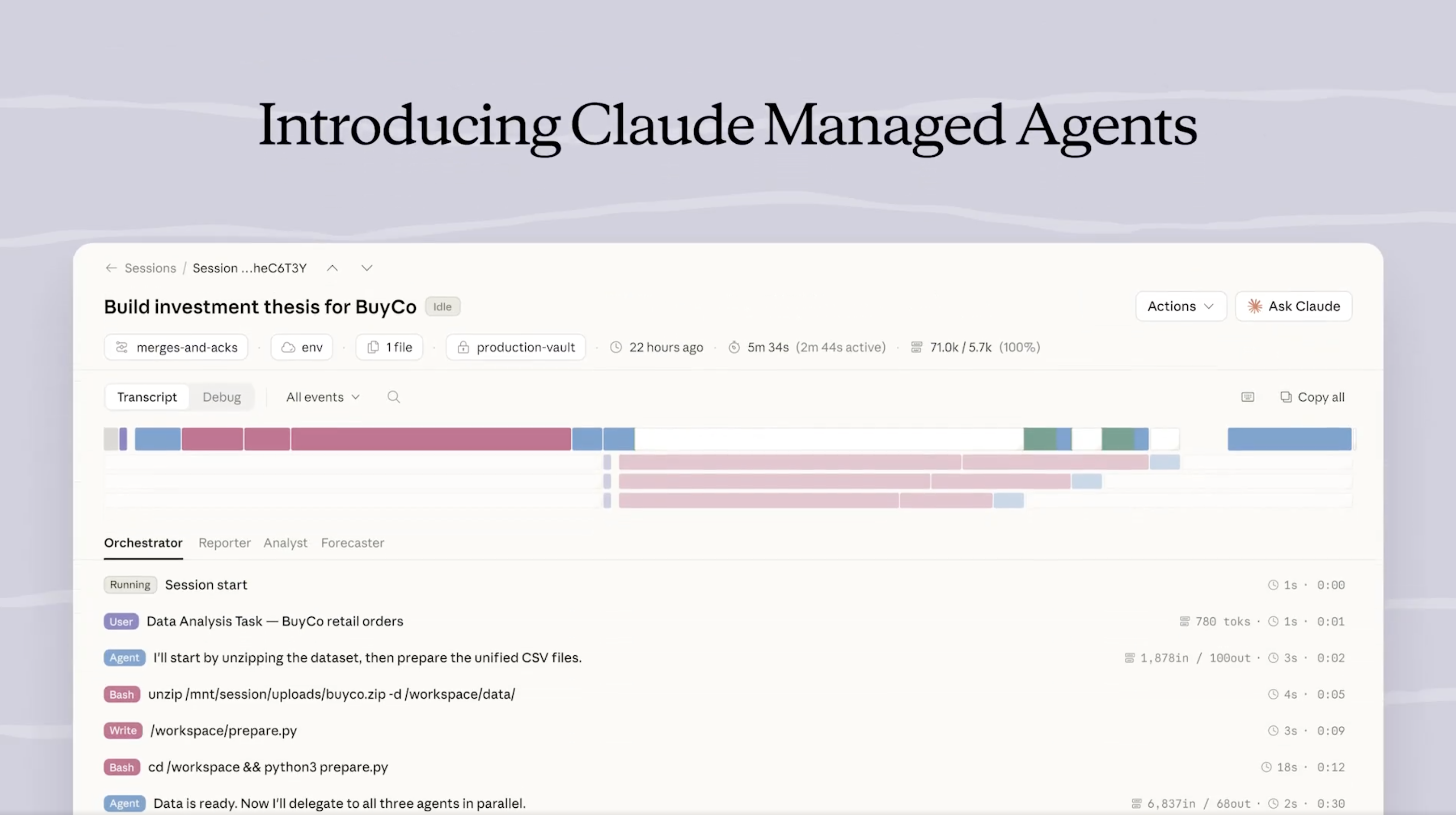

Anthropic launched Claude Managed Agents in public beta on April 8, 2026. The release notes describe a managed agent harness with secure sandboxing, built-in tools, and Server-Sent Events (SSE) streaming. The quickstart introduces three new API surfaces - /v1/agents, /v1/environments, and /v1/sessions - and requires a beta header (managed-agents-2026-04-01) during the beta period.

The short version: Anthropic is no longer just selling you a model. They are selling you a runtime for agents built on that model. That distinction matters more than the launch itself - and it is where the long-term story lives. If you have been following how ChatGPT citation behavior has shifted with GPT-5.3 and GPT-5.4, this is the same competitive dynamic playing out on the agent side of the stack.

What Claude Managed Agents actually is

Strip the marketing layer and what you get is an opinionated agent execution environment. You define an agent (the reasoning brain), an environment (the sandboxed place it runs in, with pre-wired tools), and a session (a conversation or task instance). Anthropic handles the provisioning, the tool execution loop, the event streaming, and the cleanup.

This is not a new SDK wrapper on top of the Messages API. It is a new class of endpoints that treat agents as long-running, stateful, observable objects. If you have ever built an agent from scratch - configured a tool schema, wired up a tool-call loop, managed conversation state across turns, worried about runaway costs, and built your own streaming layer on top - you already know how large a share of the engineering work this abstracts away.
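To make that list concrete, here is a minimal sketch of the hand-rolled loop a managed runtime replaces. Everything in it is hypothetical: `fake_model` stands in for a real model call, the `add` tool for a real tool registry, and the `max_steps` cap for real cost controls. It is not Anthropic's implementation.

```python
from typing import Callable

# Hypothetical tool registry: tool name -> plain Python function.
TOOLS: dict[str, Callable[..., object]] = {
    "add": lambda a, b: a + b,
}


def fake_model(history: list[dict]) -> dict:
    """Stand-in for a model call: request one tool call, then answer."""
    tool_results = [m for m in history if m["role"] == "tool"]
    if not tool_results:
        return {"type": "tool_call", "name": "add", "args": {"a": 2, "b": 3}}
    return {"type": "final", "text": f"The sum is {tool_results[-1]['content']}"}


def run_agent(prompt: str, model=fake_model, max_steps: int = 5) -> str:
    """Drive the tool-call loop until the model answers or the cap is hit."""
    history = [{"role": "user", "content": prompt}]  # conversation state
    for _ in range(max_steps):  # crude guard against runaway loops
        reply = model(history)
        if reply["type"] == "final":
            return reply["text"]
        result = TOOLS[reply["name"]](**reply["args"])  # execute the tool
        history.append({"role": "tool", "name": reply["name"], "content": result})
    raise RuntimeError("step budget exhausted before a final answer")
```

Even this toy version has to own state, tool dispatch, and a stop condition. Sandboxing, streaming, and persistence would all come on top.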

The public beta ships with built-in tools (execution sandboxes, file operations, HTTP access, and other primitives Anthropic has been quietly productionizing for a year) and gives developers a single API for creating an agent, spinning up an environment, and streaming events back in real time.

What the quickstart reveals about Anthropic's architecture

The quickstart is a more interesting artifact than the announcement. Three things stand out.

First, the object model is explicit. Agents, environments, and sessions are three distinct concepts. That is a deliberate architectural choice. It means Anthropic is separating what an agent is (its prompt, its tool access, its policy) from where it runs (the sandboxed execution context) from when it runs (a specific invocation with its own state). Most DIY agent frameworks collapse these into a single runtime object. Separating them lets you reuse agents across environments, rotate environments without touching agents, and retain sessions for audit.
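A rough way to picture the separation is three small types. This is a sketch for illustration only: the class and field names are assumptions, not Anthropic's actual schema.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Agent:
    """What the agent is: prompt, tool access, policy."""
    name: str
    system_prompt: str
    tools: tuple[str, ...] = ()


@dataclass(frozen=True)
class Environment:
    """Where it runs: the sandboxed execution context."""
    name: str


@dataclass
class Session:
    """When it runs: one invocation with its own state."""
    agent: Agent
    environment: Environment
    events: list[str] = field(default_factory=list)


reviewer = Agent("reviewer", "Review the diff.", tools=("file_read",))
staging = Environment("staging")
production = Environment("production")

# One agent definition, reused across two environments.
s1 = Session(reviewer, staging)
s2 = Session(reviewer, production)
s1.events.append("tool_call:file_read")  # state lives on the session only
```

Because the agent and environment are immutable here and only the session carries state, swapping `staging` for `production` never touches the agent definition, and each session keeps its own event history for audit.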

Second, streaming is a first-class concern. SSE is the default delivery mechanism for events. This tells you Anthropic expects agent runs to last seconds to minutes rather than return as single-shot completions. Long-running execution is the hard part of productionizing agents, and the API surface reflects that reality.
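For readers who have not consumed SSE directly, the wire format is plain text: `event:` and `data:` fields, with a blank line ending each event. The sketch below parses that generic format. The event names in the sample (`tool_call`) are made up for illustration, and the parser skips the `id` and `retry` fields for brevity.

```python
from typing import Iterable, Iterator


def parse_sse(lines: Iterable[str]) -> Iterator[tuple[str, str]]:
    """Yield (event_name, data) pairs from an SSE stream's text lines."""
    event, data = "message", []
    for raw in lines:
        line = raw.rstrip("\r\n")
        if line == "":  # blank line ends the current event
            if data:
                yield event, "\n".join(data)
            event, data = "message", []
        elif line.startswith(":"):  # comment, often used as a keep-alive
            continue
        else:
            name, _, value = line.partition(":")
            if value.startswith(" "):
                value = value[1:]  # the format strips one leading space
            if name == "event":
                event = value
            elif name == "data":
                data.append(value)


# Hypothetical stream contents, not real Managed Agents output.
sample = [
    "event: tool_call\n",
    'data: {"tool": "bash"}\n',
    "\n",
    ": keep-alive\n",
    "data: done\n",
    "\n",
]
events = list(parse_sse(sample))
```

In practice you would feed this from a streaming HTTP response and dispatch on the event name as each event arrives, which is what makes minute-long runs observable while they are still in progress.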

Third, the beta header is load-bearing. The fact that every request needs managed-agents-2026-04-01 is not just versioning hygiene. It signals that the surface area will change, and Anthropic wants an explicit opt-in per integration. Expect the object model to tighten over the next few months.
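As a sketch of what the per-request opt-in might look like, the snippet below builds a request without sending it. The beta value comes from the quickstart; the rest is assumed. The header names `anthropic-beta`, `anthropic-version`, and `x-api-key` follow the convention of Anthropic's existing Messages API, and the request body is a placeholder rather than the documented schema.

```python
import json
import urllib.request

BETA_VERSION = "managed-agents-2026-04-01"  # beta value named in the quickstart


def build_request(path: str, api_key: str, body: dict) -> urllib.request.Request:
    """Build (but do not send) a POST request that opts in to the beta."""
    return urllib.request.Request(
        url=f"https://api.anthropic.com{path}",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "x-api-key": api_key,               # assumed, as in the Messages API
            "anthropic-version": "2023-06-01",  # assumed, as in the Messages API
            "anthropic-beta": BETA_VERSION,     # assumed header name
            "content-type": "application/json",
        },
        method="POST",
    )


# Placeholder key and body, for illustration only.
req = build_request("/v1/agents", "sk-placeholder", {"name": "demo"})
```

Keeping the beta value in one constant means a future version bump is a one-line change, which matters if the surface shifts as expected.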

Why this matters for teams building agent products

Most production agent systems today are built on a messy stack: a model provider (OpenAI, Anthropic, Google), a framework (LangChain, LlamaIndex, Inngest, or something home-rolled), a sandbox for tool execution (E2B, Modal, custom Docker), an observability layer (LangSmith, Helicone, Phoenix), and a backend that glues it together. Teams spend real engineering cycles on the plumbing: tool execution sandboxes, session persistence, concurrent agent isolation, retry logic, cost controls, and streaming pipelines.

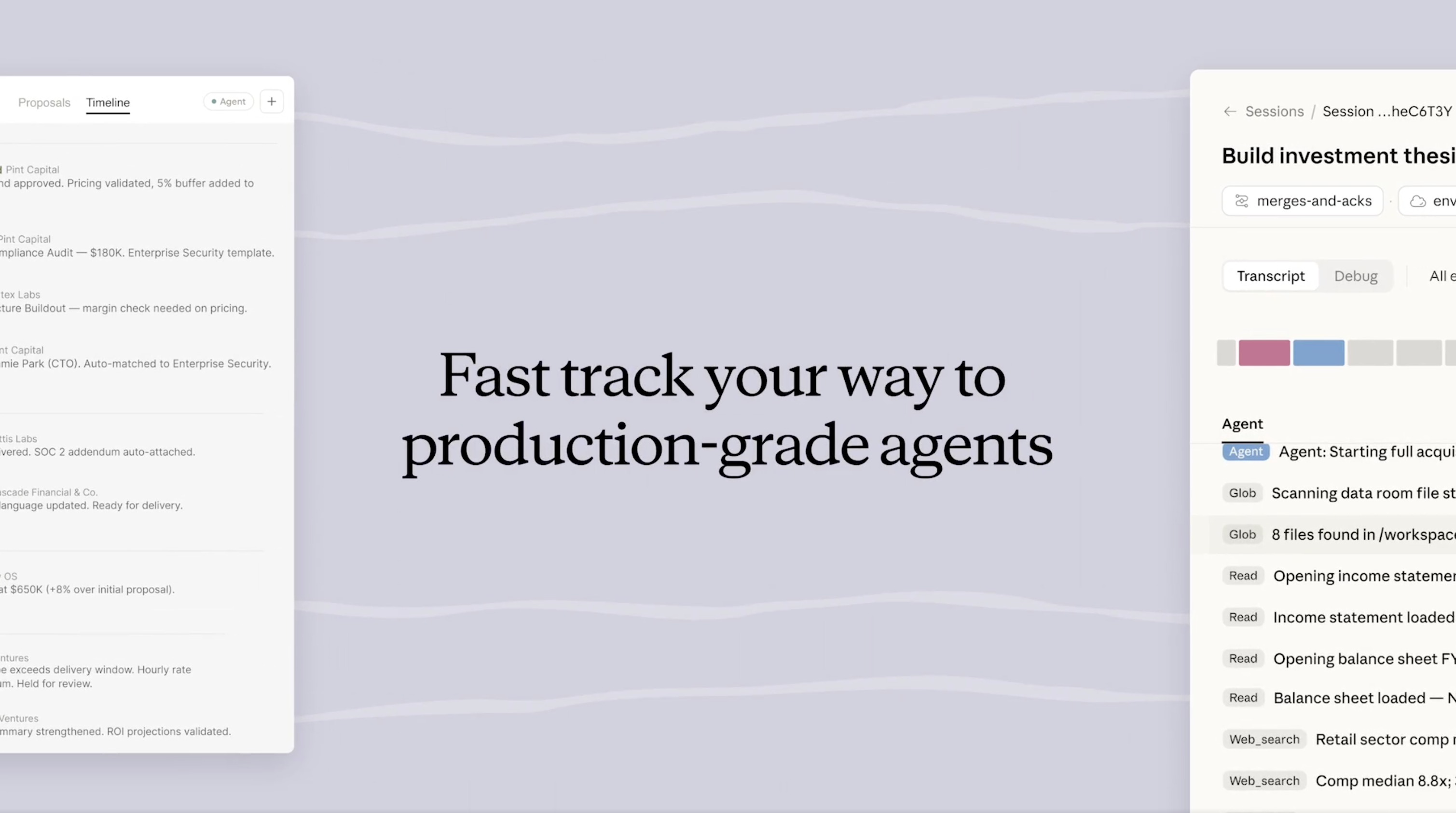

Claude Managed Agents is Anthropic saying: you should not have to build any of that. Bring your agent definition and your tool schema; we will run it, isolate it, stream events back to you, and hand you a session you can query later.

For smaller teams, this is a genuine unlock. The engineering cost of "reliable agent infrastructure" drops from weeks to hours. For larger teams already running fleets of agents on custom infra, the calculus is different - they have already paid the tax, and they have their own opinions about isolation, observability, and cost control. They will watch the beta carefully but not migrate tomorrow.

Where it fits vs DIY agent stacks

The honest comparison is not "Claude Managed Agents vs LangChain." It is "do you need your own runtime, or can you rent Anthropic's?"

Rent it when: You are building a product where Claude is the reasoning layer and you want to ship agents fast. You are early. You do not have strong opinions about tool execution, session storage, or streaming. You trust Anthropic on reliability and do not need to host execution in your own VPC.

Build it yourself when: You need multi-model orchestration (Claude, GPT, Gemini, open-source models running side by side). You have regulatory or data residency requirements that forbid sending tool inputs to a vendor-managed sandbox. You want deep observability and custom cost controls. You already have a working agent runtime and migrating is more expensive than maintaining.

This is the same tradeoff every managed-vs-self-hosted decision comes down to. Anthropic is betting that for the majority of new agent projects, the answer will be "rent it."

The strategic story: Anthropic moves up the stack

This is the real story and it has almost nothing to do with the API surface. Anthropic is moving from model provider to agent runtime provider. That is a different business.

As a model provider, Anthropic competes on intelligence per dollar. The moat is the model. Customers can swap providers with a config change.

As an agent runtime provider, Anthropic competes on reliability, tooling, and integration. The moat is the platform. Customers who build on /v1/agents and /v1/sessions cannot swap providers with a config change - they would need to rebuild their entire agent lifecycle layer. The switching cost goes from low to meaningful.

This is the same playbook we saw with Vercel (hosting → frontend platform), Supabase (Postgres → backend platform), and AWS itself (servers → managed everything). Infrastructure primitives become products, and products become lock-in.

For Anthropic specifically, Managed Agents also solves a strategic problem: how do you stay relevant when GPT-5 and Gemini 2.5 Pro are competitive on benchmarks? You change the unit of comparison. You stop selling models and start selling agent outcomes. That is what this launch signals. We documented the same dynamic in our 2026 State of AI Search Visibility report: the lines between search, answer engines, and agents are collapsing fast.

Risks and limits to watch

A few things to keep in mind before you bet a product on this beta.

It is still beta. The beta header is a reminder that the API will change. Do not build a business-critical agent flow on this and expect zero migration work.

Vendor lock-in is real. Once you are writing to /v1/agents and assuming Anthropic's session model, moving off is non-trivial. That is the price of convenience.

Security boundaries are Anthropic's call, not yours. You are running tools inside Anthropic's sandboxes with Anthropic's isolation model. If you have opinions about egress filtering, secret management, or network policies, you are accepting whatever defaults Anthropic ships until they expose controls.

Observability is limited early on. The SSE event stream is a start, but production agent operators usually want deeper traces, replay, and structured evals. Expect the observability story to evolve.

Cost visibility matters. Managed runtimes make it easy to overspend by accident. Understand how sessions, environments, and tool calls are billed before you scale.
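One client-side safeguard is a hard per-session cap that you enforce yourself. The sketch below is a generic pattern, not an Anthropic feature, and the amounts are invented for illustration. How sessions, environments, and tool calls are actually billed is something to confirm in the pricing documentation.

```python
from dataclasses import dataclass


@dataclass
class SessionBudget:
    """Track estimated spend for one session against a hard cap, in cents."""
    limit_cents: int
    spent_cents: int = 0

    def charge(self, amount_cents: int) -> None:
        """Record a cost; refuse it if the cap would be exceeded."""
        if self.spent_cents + amount_cents > self.limit_cents:
            raise RuntimeError("session budget exceeded")
        self.spent_cents += amount_cents


budget = SessionBudget(limit_cents=100)
budget.charge(40)  # hypothetical estimate for a model turn
budget.charge(50)  # hypothetical estimate for a tool call
```

Working in integer cents avoids floating-point drift, and rejecting the charge before recording it keeps the tracked total at or under the cap.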

What this means for GEO and AI visibility

For brands, marketers, and anyone who cares about AI search visibility, this launch reinforces a trend we have been tracking all year: the line between "AI search" and "AI agent" is dissolving. When an agent powered by Claude can autonomously browse the web, call tools, and make decisions, the question "does ChatGPT mention my brand?" becomes "does the agent recommend my brand, call my API, or cite my content as a source?"

That is a meaningfully harder visibility problem. You can read our analysis of 2.1M sources cited by AI search engines for the current state of which domains get pulled in, and our guide to running an AI visibility audit for a practical framework. As more agents run inside model-provider runtimes, optimizing for AI citation and agent discoverability becomes just as important as traditional search visibility.

If you are a developer building on Claude Managed Agents, the citation tracking problem does not go away just because the runtime is managed - it shifts. You now need to know which sources your own agent trusts, and whether the third-party brands your agent recommends are the ones your users expect.

The bottom line

Claude Managed Agents is not just an API launch. It is Anthropic repositioning from model provider to agent infrastructure provider - quietly moving the unit of competition from benchmark scores to agent outcomes. Developers get a real productivity win. Anthropic gets a stickier business. The agent stack gets one fewer reason to build from scratch.

If you are starting a new agent project today, it is worth a serious evaluation. If you already have production agents running on custom infra, treat this as a signal about where the market is heading rather than a migration target. Either way, the interesting question is not "did Anthropic ship a feature?" but "how many other labs will ship their own agent runtimes in the next six months?"

The answer is probably: all of them.

For brands thinking about AI visibility, this shift matters too. Tracking how your brand is surfaced by ChatGPT, Claude, Perplexity, Google AI, and Gemini is no longer just about citations in answers - it is about which agents recommend you, which tools they call, and which sources they trust. That is the problem Geonimo is built to measure, and you can start a free trial or see our pricing if you want to track your brand's visibility across every major AI engine.